The foundation nobody teaches you to survive the AI era (Part 1)

AI does everything. But if you don't understand what's underneath, you're replaceable. The technical fundamentals that separate those who use AI from those used by it.


Can you explain why ChatGPT sometimes “hallucinates”?

I’m not asking whether you know it hallucinates. Everyone knows that. I’m asking whether you know the technical mechanism that causes it. Whether you can pop the hood and point to exactly where the gears fail.

If the answer is no, I have uncomfortable news: you’re not an AI professional. You’re a user. And at the speed this industry moves, the distance between the two is getting increasingly dangerous.

Think of it this way: everyone knows how to drive a car. But when the engine makes a strange noise, 99% of people take it to the mechanic. The mechanic understands what’s underneath. And in the era of self-driving cars, those who only know how to drive will be replaced by the software. Those who understand the engine will build the software.

It’s the same with AI. Millions of people know how to use ChatGPT, Claude, Gemini. They write prompts, copy answers, paste into code. But when something goes wrong, when the model hallucinates, when costs explode, when latency blows the SLA, they have no idea what to tweak. Because they never learned what’s underneath.

There is a modern technical foundation that separates those who will thrive in this era from those who will be replaced. It’s not a PhD in mathematics. It’s not memorizing papers. It’s specific, practical fundamentals that any developer with discipline can learn.

In this post (Part 1 of 2), I’ll give you the complete map. Today we cover theory: essential mathematics, how LLMs actually work, and data and representations. In Part 2, we’ll go to practice: AI systems engineering, evaluation and observability.

Let’s go.

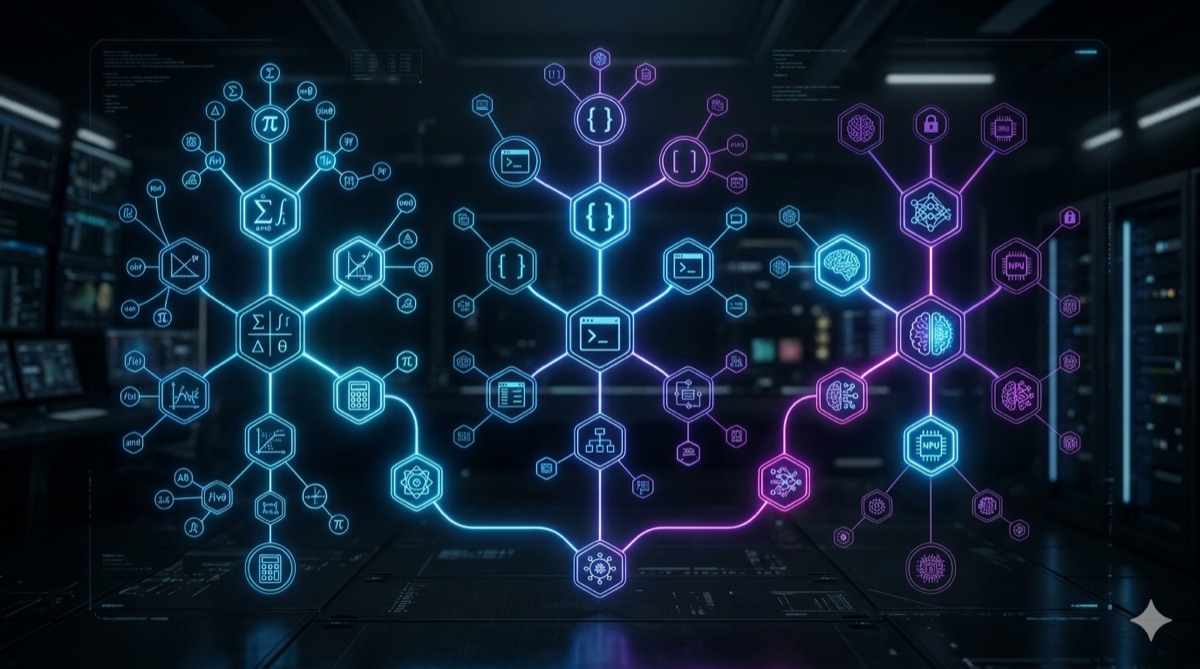

The map: what you actually need to know

Before diving in, I need to show you the complete map. There are five fundamental areas, organized like an RPG skill tree. Each one unlocks the next:

| # | Area | What it covers | Part |
|---|---|---|---|
| 1 | Essential mathematics | Linear algebra, probability, gradients | Part 1 (this post) |
| 2 | How LLMs work | Tokenization, embeddings, attention, generation | Part 1 (this post) |
| 3 | Data and representations | Vector databases, chunking, feature engineering | Part 1 (this post) |
| 4 | AI systems engineering | RAG, agents, guardrails, orchestration | Part 2 |
| 5 | Evaluation and observability | Evals, metrics, monitoring, costs | Part 2 |

The order matters. You can’t understand embeddings without linear algebra. You can’t understand attention without probability. You can’t build RAG without understanding vector databases. Each level is a prerequisite for the next.

The good news: you don’t need to master everything at an academic level. You need functional intuition. Understanding enough to make informed technical decisions, debug real problems, and have productive conversations with those who go deeper.

Essential mathematics: you don’t need a PhD, you need intuition

This is the part that scares most developers. “Math? I became a programmer precisely to escape that.” I get it. I was that person. But the truth is that the math behind modern AI is surprisingly accessible if you focus on what matters and ignore what doesn’t.

Linear algebra: the language of AI

If AI had a native language, it would be linear algebra. Everything an AI model “sees,” “hears,” or “reads” is transformed into vectors and matrices before being processed. Every word, every image, every sound becomes a list of numbers.

A vector is simply an ordered list of numbers. For example, the word “coffee” can be represented as a vector of 1,536 numbers (in OpenAI’s text-embedding-3-small model). Those numbers capture the “meaning” of the word in mathematical space.

The most famous example is word arithmetic. In 2013, researchers at Google (Mikolov et al.) showed that with Word2Vec:

```
vector("king") - vector("man") + vector("woman") ≈ vector("queen")
```

This works because vectors capture semantic relationships. The “direction” from man to woman is approximately the same as the direction from king to queen. It looks like magic, but it’s pure linear algebra.
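The same arithmetic can be reproduced at toy scale. Here is a minimal sketch using hand-made 3-dimensional vectors. The values and their “royalty / male / female” interpretation are invented for illustration. Real Word2Vec vectors are learned from text and have hundreds of dimensions. As in the usual analogy test, the three input words are excluded from the candidates:

```python
import numpy as np

# Toy vectors invented for illustration only (not real Word2Vec output).
# Dimensions loosely mean: [royalty, maleness, femaleness]
vectors = {
    "king":  np.array([0.9, 0.8, 0.1]),
    "queen": np.array([0.9, 0.1, 0.8]),
    "man":   np.array([0.1, 0.9, 0.1]),
    "woman": np.array([0.1, 0.1, 0.9]),
    "apple": np.array([0.0, 0.3, 0.3]),
}

def cosine_similarity(a, b):
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

def analogy(a, b, c, vocab):
    """Return the word closest to vector(a) - vector(b) + vector(c),
    excluding the three input words."""
    target = vocab[a] - vocab[b] + vocab[c]
    candidates = {w: v for w, v in vocab.items() if w not in (a, b, c)}
    return max(candidates, key=lambda w: cosine_similarity(target, candidates[w]))

print(analogy("king", "man", "woman", vectors))  # queen
```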

The most important concept for day-to-day work is cosine similarity. It measures how much two vectors point in the same direction, regardless of magnitude. This is how semantic search systems find relevant documents, how RAG selects context, and how recommendations work.

```python
import numpy as np

def cosine_similarity(a, b):
    # Dot product divided by the product of the lengths:
    # 1 = same direction, 0 = orthogonal, -1 = opposite
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

# Fictional 3-dimensional vectors, for illustration only
coffee = np.array([0.8, 0.6, 0.1])
cappuccino = np.array([0.75, 0.65, 0.15])
car = np.array([0.1, 0.2, 0.9])

print(cosine_similarity(coffee, cappuccino))  # ~0.996 (very similar)
print(cosine_similarity(coffee, car))  # ~0.31 (not similar)
```

What you need to know: vectors, matrices, dot product, cosine similarity, matrix multiplication.

What you DON’T need: spectral decomposition, eigenvalues/eigenvectors, Hilbert spaces, advanced tensor algebra.

Probability and statistics: the language of uncertainty

LLMs don’t “know” anything. They calculate probabilities. When ChatGPT answers your question, it’s not querying a knowledge base. It’s doing next-token prediction: given all the preceding text, what is the most probable next token?

Formally: P(next_token | all_previous_tokens)

Each time the model generates a token, it calculates a probability distribution over the entire vocabulary (typically tens of thousands to a few hundred thousand possible tokens) and picks one. The temperature parameter controls how that choice is made:

- Temperature = 0: always picks the highest-probability token. More “deterministic” and repetitive output.

- Temperature = 1: samples proportionally to probabilities. More varied and “creative.”

- Temperature > 1: flattens the distribution, making unlikely tokens more probable. More chaotic.

The function that transforms the model’s raw scores into probabilities is called softmax:

```python
import numpy as np

def softmax(logits, temperature=1.0):
    scaled = logits / temperature
    # Subtracting the max before exponentiating avoids overflow; the result is unchanged
    exp_scaled = np.exp(scaled - np.max(scaled))
    return exp_scaled / exp_scaled.sum()

logits = np.array([2.0, 1.0, 0.5, 0.1])

print(np.round(softmax(logits, temperature=0.5), 2))  # [0.83 0.11 0.04 0.02] - concentrated
print(np.round(softmax(logits, temperature=1.0), 2))  # [0.57 0.21 0.13 0.09] - balanced
print(np.round(softmax(logits, temperature=2.0), 2))  # [0.41 0.25 0.19 0.16] - spread out
```

See how low temperature concentrates most of the probability on the highest-scoring token, while high temperature spreads it more evenly. That’s everything you need to know about temperature to make technical decisions day to day.
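Softmax only produces the distribution. Picking the token is a separate sampling step. Below is a minimal sketch of that step. It assumes NumPy’s `default_rng` as the random source and treats temperature 0 as plain argmax (greedy decoding). Real inference engines typically add further controls such as top-k or top-p, which are not shown here:

```python
import numpy as np

def softmax(logits, temperature=1.0):
    scaled = np.asarray(logits, dtype=float) / temperature
    exp_scaled = np.exp(scaled - np.max(scaled))  # subtract max for numerical stability
    return exp_scaled / exp_scaled.sum()

def sample_token(logits, temperature=1.0, rng=None):
    """Return the index of the next token, chosen from raw logits."""
    if temperature == 0:
        # Greedy decoding: dividing by zero is undefined, so temperature 0
        # is special-cased as "always take the highest logit".
        return int(np.argmax(logits))
    if rng is None:
        rng = np.random.default_rng()
    probs = softmax(logits, temperature)
    return int(rng.choice(len(probs), p=probs))  # draw one index according to probs

logits = np.array([2.0, 1.0, 0.5, 0.1])
rng = np.random.default_rng(seed=0)

print(sample_token(logits, temperature=0))  # 0, every time
draws = [sample_token(logits, temperature=2.0, rng=rng) for _ in range(10_000)]
counts = np.bincount(draws, minlength=4)
print(counts / counts.sum())  # close to softmax(logits, 2.0), about [0.41, 0.25, 0.19, 0.16]
```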

What you need to know: probability distributions, mean and variance, Bayes’ theorem (intuition, not the formula), softmax, sampling.

What you DON’T need: advanced Markov chains, stochastic processes, formal information theory.

Calculus: just the minimum to understand gradients

This is where most people give up. But I promise: you need exactly ONE concept from calculus to understand how AI models learn. That concept is the gradient.

Think of it this way: you’re on top of a mountain, in total darkness, and need to get down to the valley. You can’t see anything. But you can feel the terrain’s slope with your feet. So you take a step in the steepest downhill direction, feel the slope again, adjust direction, and repeat.

That’s gradient descent. The AI model starts with random weights (position on the mountain), calculates how wrong its answers are (altitude), and adjusts the weights in the direction that reduces the error (walks downhill). The gradient is the “slope”: it points in the direction where the error increases fastest, so each update moves the weights the opposite way.

The learning rate is the step size. Too large: you leap over the valley and climb the other side. Too small: it takes forever to arrive.
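The mountain analogy fits in a few lines of Python. Here is a minimal sketch that assumes a toy error function with a single weight, (w - 3)^2, whose valley sits at w = 3. A real model does the same thing for billions of weights at once, with the gradient computed by backpropagation:

```python
def loss(w):
    # Toy "altitude": the error is smallest (zero) at the valley, w = 3.
    return (w - 3.0) ** 2

def gradient(w):
    # Derivative of (w - 3)^2: positive right of the valley, negative left of it.
    return 2.0 * (w - 3.0)

def gradient_descent(w, learning_rate, steps):
    for _ in range(steps):
        w = w - learning_rate * gradient(w)  # step against the slope, i.e. downhill
    return w

print(gradient_descent(10.0, learning_rate=0.1, steps=100))    # very close to 3.0
print(gradient_descent(10.0, learning_rate=0.001, steps=100))  # still far from 3 (steps too small)
print(gradient_descent(10.0, learning_rate=1.1, steps=100))    # huge value (overshoots and diverges)
```

The three calls show the learning-rate trade-off. With 0.1 the weight reaches the valley. With 0.001 it is still far away after 100 steps. With 1.1 it overshoots further on every step until the value blows up.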

What you need to know: derivative as “rate of change,” gradient as “direction of steepest increase” (you step the opposite way), gradient descent as the learning loop, learning rate as step size.

What you DON’T need: solving integrals, Taylor series, differential equations, backpropagation at the original paper level.

Summary: what to study vs what to ignore

| Topic | Need to know | Can ignore |
|---|---|---|
| Linear algebra | Vectors, matrices, dot product, cosine similarity | Spectral decomposition, eigenvalues |
| Probability | Distributions, Bayes (intuition), softmax | Advanced Markov chains, information theory |
| Calculus | Derivatives, gradients, learning rate | Integrals, series, differential equations |

How LLMs actually work (no bullshit)

Now that you have the mathematical foundation, let’s pop the hood on an LLM. I’ll explain the complete flow, from the prompt you type to the response that appears on screen. No unnecessary jargon, no simplifications that lie.

Tokenization: the first step nobody explains

LLMs don’t read words. They read tokens. A token is a piece of text that can be a whole word, part of a word, a special character, or even a space.

The sentence “Artificial intelligence is fascinating” doesn’t enter the model as 4 words. It’s broken into tokens, for example (the exact split depends on the tokenizer):

```
["Art", "ificial", " intelligence", " is", " fascinating"]
```

Why does this matter day to day?

Cost. You pay per token, not per word. And languages like Portuguese generate more tokens than English for the same content (because the tokenizer was trained predominantly on English). I’ve written in detail about the invisible cost of wasted tokens.

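Context limits. When a model has “128K tokens of context,” that’s 128K tokens, not 128K words. For English text, a common rule of thumb is about three-quarters of a word per token, so 128K tokens is roughly 96K words. For languages the tokenizer handles less efficiently, it’s even fewer.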

Strange behavior. Models sometimes fail at simple character-counting tasks (“how many R’s in ‘strawberry’?”) because they don’t see individual characters, they see tokens.

You can experiment with OpenAI’s tiktoken:

```python
import tiktoken

enc = tiktoken.encoding_for_model("gpt-4")
tokens = enc.encode("Artificial intelligence is fascinating")

print(f"Tokens: {len(tokens)}")  # a handful of tokens; the exact count depends on the encoding
print([enc.decode([t]) for t in tokens])  # shows the text of each token
```

Embeddings: from tokens to vectors

Each token is converted into a vector of numbers (an embedding). If the model has dimension 4096, each token becomes a list of 4,096 numbers. Those numbers are the “weights” the model learned during training.

Tokens with similar meaning end up with similar vectors. “Dog” and “canine” are close in vector space. “Dog” and “economics” are far apart.

This conversion from token to vector is the bridge between text (which humans understand) and mathematics (which the model processes). All remaining processing happens in vector space.
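Mechanically, this conversion is a table lookup: each token ID selects one row of a matrix learned during training. Here is a minimal sketch with a hypothetical three-word vocabulary, a hypothetical dimension of 4, and random (untrained) values:

```python
import numpy as np

# Hypothetical tiny vocabulary and embedding size, for illustration only.
vocab = {"the": 0, "dog": 1, "barks": 2}
d_model = 4

# In a real model this matrix is learned; here it is random.
rng = np.random.default_rng(seed=42)
embedding_table = rng.normal(size=(len(vocab), d_model))  # shape (vocab_size, d_model)

token_ids = [vocab[w] for w in ["the", "dog", "barks"]]
token_vectors = embedding_table[token_ids]  # one row per token, in sequence order

print(token_vectors.shape)  # (3, 4)
```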

In practice, you interact with embeddings all the time:

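```python
import numpy as np
from openai import OpenAI

client = OpenAI()  # reads the API key from the OPENAI_API_KEY environment variable

response = client.embeddings.create(
    model="text-embedding-3-small",
    input=["dog playing in the park", "canine running in the garden"],
)

# Two semantically similar texts will have close embeddings
embedding_1 = np.array(response.data[0].embedding)  # 1536-dimension vector
embedding_2 = np.array(response.data[1].embedding)

similarity = np.dot(embedding_1, embedding_2) / (
    np.linalg.norm(embedding_1) * np.linalg.norm(embedding_2)
)
print(similarity)  # cosine similarity, as defined in the math section
```

When you do semantic search, RAG, or text classification with AI, you’re using embeddings under the hood.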

Attention: the mechanism that changed everything

In 2017, researchers at Google published the paper “Attention Is All You Need” (Vaswani et al.) and changed the history of AI. The central mechanism of that paper is self-attention.

The idea is elegant: for each token in the sequence, the model calculates how much that token should “pay attention” to each of the other tokens.

Example: in the sentence “The bank is near the river,” how does the model know “bank” refers to a riverbank and not a financial institution? Because the attention mechanism gives high weight to the connection between “bank” and “river.” The context “river” pulls the meaning of “bank” in the right direction.

Without attention, the model would process each token in isolation, like a bag of words. With attention, it understands relationships between tokens regardless of distance. In “The cat that my friend’s neighbor adopted last year escaped,” attention links “cat” to “escaped” even though seven words separate them.
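The core computation from that paper is scaled dot-product attention, softmax(QKᵀ / √d_k)·V. Here, the queries Q, keys K, and values V are linear projections of the token vectors. Below is a minimal single-head NumPy sketch. It uses random (untrained) projection matrices and the causal mask used by GPT-style decoders. Real models run many heads in every layer:

```python
import numpy as np

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v, causal=True):
    """Single-head scaled dot-product self-attention.

    X: (n_tokens, d_model) token vectors; W_q, W_k, W_v: (d_model, d_head).
    """
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    scores = Q @ K.T / np.sqrt(K.shape[-1])  # (n_tokens, n_tokens): one score per token pair
    if causal:
        # GPT-style decoders: a token may attend only to itself and earlier tokens.
        mask = np.triu(np.ones_like(scores, dtype=bool), k=1)
        scores = np.where(mask, -np.inf, scores)
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(seed=0)
n_tokens, d_model, d_head = 5, 8, 4
X = rng.normal(size=(n_tokens, d_model))
W_q, W_k, W_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))

output, weights = self_attention(X, W_q, W_k, W_v)
print(output.shape, weights.shape)  # (5, 4) (5, 5)
```

Each entry `weights[i, j]` says how much token i looks at token j. The matrix has one entry per pair of tokens (n × n), which is why attention’s cost grows quadratically with sequence length.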

What you need to know:

- Self-attention allows each token to “look at” other tokens in the sequence (in GPT-style models, every token that comes before it)

- This is what makes Transformers powerful for understanding context

- The context window (128K, 200K, 1M tokens) is the limit of how many tokens can “see each other” simultaneously

- More context = more attention calculations = more computational cost (quadratic with sequence length)

The generation loop: next-token prediction

Now let’s put it all together. The complete process of generating a response works like this:

| Step | What happens |
|---|---|
| 1. Input | You type “What is the capital of Brazil?” |
| 2. Tokenization | Text is broken into tokens |
| 3. Embeddings | Each token becomes a vector |
| 4. Attention layers | Tokens interact with each other (dozens of layers) |
| 5. Output | Model produces probabilities for the next token |
| 6. Sampling | A token is chosen (influenced by temperature) |
| 7. Loop | Chosen token is added to the sequence; back to step 3 |

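The model generates one token at a time. “Brasília” doesn’t appear all at once. It appears as “Bras” + “ília” (or similar, depending on the tokenizer). For each new token, the model runs a full forward pass through all its layers again. In practice, inference engines cache the attention keys and values already computed for earlier tokens (the “KV cache”), so that work isn’t repeated from scratch.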
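Steps 2 to 7 boil down to a short loop. Here is a minimal sketch that assumes a hypothetical `toy_model` standing in for the whole Transformer. It returns made-up logits based only on the last token, whereas a real LLM uses the entire sequence:

```python
import numpy as np

# Hypothetical tiny vocabulary; real tokenizers have tens of thousands of entries or more.
VOCAB = ["<end>", "The", " capital", " of", " Brazil", " is", " Bras", "ília", " Rio"]

def toy_model(token_ids):
    """Hypothetical stand-in for steps 2-5: returns one logit per vocabulary entry.
    It only looks at the last token; a real LLM attends to the whole sequence."""
    last = VOCAB[token_ids[-1]]
    logits = np.full(len(VOCAB), -5.0)
    if last == " is":
        logits[VOCAB.index(" Bras")] = 3.0  # most probable continuation
        logits[VOCAB.index(" Rio")] = 1.0   # plausible but wrong continuation
    elif last == " Bras":
        logits[VOCAB.index("ília")] = 5.0
    else:
        logits[VOCAB.index("<end>")] = 5.0
    return logits

def generate(prompt_ids, temperature=0.0, max_new_tokens=10, seed=0):
    rng = np.random.default_rng(seed)
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        logits = toy_model(ids)  # forward pass: next-token logits
        if temperature == 0:
            next_id = int(np.argmax(logits))  # greedy choice
        else:
            p = np.exp((logits - logits.max()) / temperature)  # softmax with temperature
            next_id = int(rng.choice(len(VOCAB), p=p / p.sum()))  # step 6: sampling
        if VOCAB[next_id] == "<end>":
            break
        ids.append(next_id)  # step 7: append and loop
    return "".join(VOCAB[i] for i in ids)

prompt = [VOCAB.index(t) for t in ["The", " capital", " of", " Brazil", " is"]]
print(generate(prompt))  # The capital of Brazil is Brasília
```

With the default temperature of 0, the loop always produces “Brasília”. With `temperature=1.0`, the lower-probability “ Rio” is sampled roughly one time in eight, and nothing in the loop checks which answer is true.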

This explains something fundamental: hallucinations. The model doesn’t query a database. It generates the most probable token given the context. If the statistical context suggests that “the capital of Brazil is” is usually followed by “Brasília,” it gets it right. But if the statistical pattern is ambiguous or the model never saw enough information about the topic, it generates the most probable token even if it’s wrong. It doesn’t “know” it’s wrong because it has no concept of truth. It has a concept of probability.

Data and representations: garbage in, garbage out (now on steroids)

In the AI era, the saying “garbage in, garbage out” has gained a new dimension. If the data feeding your system is poorly represented, poorly structured, or poorly retrieved, no model in the world will save the result.

Vector databases: the new database

Traditional databases (PostgreSQL, MySQL) are designed for exact search: “give me all users with age > 30.” Vector databases are designed for similarity search: “give me the documents most similar to this question.”

When you store an embedding (for example, the 1,536-number vector produced by text-embedding-3-small) in a vector database and then search, it finds the “closest” stored vectors using metrics like cosine similarity. This is the foundation of:

- RAG (Retrieval-Augmented Generation): fetching relevant documents to enrich the LLM’s context

- Semantic search: finding results by meaning, not exact words

- Agent memory: storing and retrieving past experiences
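Under the hood, the question a vector database answers is “which stored vectors are closest to this one?”. The simplest correct answer is to compare the query against every stored vector. The NumPy sketch below does exactly that, using invented 3-dimensional vectors in place of real embeddings. This exact, linear-time scan is what indexes such as HNSW approximate so they don’t have to check everything:

```python
import numpy as np

def top_k_cosine(query, vectors, k=2):
    """Exact (brute-force) nearest-neighbor search by cosine similarity.

    query: (d,) array; vectors: (n, d) array. Returns indices of the k most similar rows.
    """
    q = query / np.linalg.norm(query)
    V = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    scores = V @ q  # cosine similarity between the query and every stored vector
    return np.argsort(-scores)[:k]  # highest scores first

# Toy "document embeddings" invented for illustration.
docs = ["espresso recipe", "latte art tips", "car engine repair"]
doc_vectors = np.array([
    [0.8, 0.6, 0.1],
    [0.6, 0.7, 0.3],
    [0.1, 0.2, 0.9],
])
query = np.array([0.75, 0.65, 0.15])  # stand-in for the embedding of a coffee question

for i in top_k_cosine(query, doc_vectors, k=2):
    print(docs[i])
```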

The main vector databases you should know:

| Database | Type | Best for |
|---|---|---|
| pgvector | PostgreSQL extension | Those already using Postgres who want simplicity |
| Chroma | Open-source, embedded | Rapid prototyping, smaller projects |
| Weaviate | Open-source, standalone | Production with advanced features |
| Pinecone | Managed SaaS | Those who don’t want to manage infrastructure |
| Qdrant | Open-source, Rust | High performance, large scale |

The most important concept to understand is HNSW (Hierarchical Navigable Small World), the most common indexing algorithm. It creates a hierarchical graph that allows approximate search in sublinear time. It’s not exact search (hence ANN, Approximate Nearest Neighbor), but it’s fast enough for production.

Chunking and context window

LLMs have context limits. Even the latest models with 1 million tokens can’t process an entire knowledge base at once. You need to split your documents into pieces (chunks) and retrieve only the relevant ones.

Three main strategies:

Fixed-size chunking: splits text into pieces of N tokens. Simple, fast, but can cut sentences in the middle and lose context.

Semantic chunking: splits by semantic boundaries (paragraphs, sections, topic changes). Better quality, more complex to implement.

Recursive chunking: tries to split by large boundaries first (sections), and if a chunk is still too big, splits it by smaller boundaries (paragraphs, sentences). It’s the idea behind LangChain’s RecursiveCharacterTextSplitter, which the LangChain docs recommend for generic text, and it works well in most cases.
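Below is a minimal sketch of the fixed-size and recursive strategies. To stay dependency-free, it uses characters as a stand-in for tokens. This is a simplified illustration of the recursive idea, not LangChain’s actual implementation. The function names and the separator list are assumptions:

```python
def fixed_size_chunks(text, size, overlap=0):
    """Cut text into windows of `size` characters; consecutive windows share
    `overlap` characters, so text cut at a boundary appears in both."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be at least 0 and smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]

def recursive_chunks(text, max_size, separators=("\n\n", "\n", ". ", " ")):
    """Split on the largest boundary first (paragraphs) and re-split only the
    pieces that are still too long, using the next, smaller boundary."""
    if len(text) <= max_size:
        return [text] if text else []
    if not separators:
        return fixed_size_chunks(text, max_size)  # no boundary left: hard cut
    sep, smaller = separators[0], separators[1:]
    parts = text.split(sep)
    # Keep each separator attached to the piece before it, so no text is lost.
    pieces = [p + sep for p in parts[:-1]] + [parts[-1]]
    chunks, current = [], ""
    for piece in pieces:
        if len(current) + len(piece) <= max_size:
            current += piece  # pack neighboring pieces together while they fit
            continue
        if current:
            chunks.append(current)
        if len(piece) <= max_size:
            current = piece
        else:
            chunks.extend(recursive_chunks(piece, max_size, smaller))
            current = ""
    if current:
        chunks.append(current)
    return chunks

text = "First paragraph about coffee.\n\nSecond paragraph. It has two sentences that are long."
for chunk in recursive_chunks(text, max_size=40):
    print(repr(chunk))
```

Because each separator stays attached to the piece before it, joining the chunks reproduces the original text exactly. That makes the splitter easy to test. Production splitters such as LangChain’s also support an overlap between chunks.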

Bad chunking is one of the most common causes of poor RAG results. If your chunks cut information in the middle, the model receives incomplete context and generates incomplete or wrong answers. I go deeper on this in the post about RAG in practice.

Feature engineering in the embeddings era

Before LLMs, transforming raw data into useful representations for models was manual, artisanal work. You’d create features like one-hot encoding for categories, TF-IDF for text, normalization for numbers.

With embeddings, much of this work is automated. An embedding model transforms text into vectors that capture meaning without you needing to define rules manually.

But be careful: embeddings aren’t a silver bullet. The practical rule is:

- Structured data (tables, numbers, categories): traditional features still work better. XGBoost with good features beats LLMs on many tabular tasks.

- Unstructured data (text, image, audio): embeddings are superior. Use embedding models specific to the data type.

- Mixed data: combine both. Numeric features + text embeddings concatenated in the same pipeline.
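For mixed data, “concatenated” literally means placing the numeric columns and the embedding dimensions side by side in one feature matrix. Here is a minimal sketch with hypothetical product data. Invented 4-dimensional vectors stand in for real embeddings, which would have hundreds or thousands of dimensions:

```python
import numpy as np

def standardize(column):
    """Scale a numeric column to zero mean and unit variance."""
    column = np.asarray(column, dtype=float)
    return (column - column.mean()) / column.std()

# Hypothetical product data: two numeric features plus one text embedding per product.
prices = [10.0, 25.0, 40.0]
ratings = [4.5, 3.8, 4.9]
# Invented values standing in for the output of a real embedding model.
text_embeddings = np.array([
    [0.12, -0.40, 0.33, 0.05],
    [0.08, -0.35, 0.30, 0.10],
    [-0.50, 0.22, 0.01, 0.47],
])

numeric = np.column_stack([standardize(prices), standardize(ratings)])  # (3, 2)
features = np.hstack([numeric, text_embeddings])  # (3, 2 + 4), one row per product
print(features.shape)  # (3, 6)
```

Standardizing the numeric columns first keeps a feature like price, with values in the tens, from dwarfing embedding values. For unit-normalized embeddings, those values fall between -1 and 1. The resulting matrix can then go into a tabular model such as the XGBoost mentioned above.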

This connects directly with what we discussed in the post about how using AI for everything is like using a cannon to kill a mosquito: knowing when to use embeddings and when to use traditional features is part of knowing when to use AI and when not to.

Next chapter: from theory to production (Part 2)

Everything we covered so far is theory. The foundation. The base. But knowing theory without knowing how to build is like knowing anatomy without knowing how to operate. You understand the body, but you can’t save the patient.

In Part 2, we head to the operating table:

- AI systems engineering: how to architect RAG in production, orchestrate autonomous agents, implement guardrails that prevent disasters, and decide between fine-tuning and prompting

- Evaluation and observability: how to measure whether your AI actually works (spoiler: “looks good” is not a metric), create consistent evals, monitor costs and latency, and detect degradation before the user complains

Part 2 is coming soon. If you made it this far, you already have a huge advantage over those who only know how to copy prompts.

Conclusion: AI does everything, except think for you

Let’s recap the map:

- Essential mathematics: linear algebra to understand embeddings, probability to understand generation, gradients to understand learning

- How LLMs work: tokenization, embeddings, attention, next-token prediction

- Data and representations: vector databases, chunking, when to use embeddings vs traditional features

These three pillars are what separates those who pilot AI from those piloted by it. AI replaces execution, not comprehension. It generates code, but doesn’t understand why that code is the right choice. It answers questions, but doesn’t know when the answer is wrong. It processes data, but doesn’t know whether the data makes sense.

Those who understand the fundamentals can:

- Diagnose why the model is hallucinating (bad context? wrong chunking? high temperature?)

- Choose the right tool for each problem (LLM? embedding + vector search? traditional algorithm?)

- Optimize costs (reduce tokens, cache embeddings, use smaller models where possible)

- Have productive technical conversations with the team instead of repeating buzzwords

I’ve previously written about why fundamentals matter more than certifications from a philosophical perspective. This post is the practical complement: what exactly those fundamentals are and how to start studying them.

If this post was useful, share it with that dev who thinks knowing how to prompt is enough. They’ll thank you when the hype fades and the fundamentals remain.