Auto Dream: how Claude Code mimics REM sleep to consolidate memory

Claude Code just quietly shipped one of the smartest agent features I've ever seen. It's called Auto Dream, and it works like the human brain during sleep.

And the most fascinating part isn’t what it does — it’s how it does it. Because the inspiration comes from a place none of us would expect: the human brain while sleeping.

Let me explain.

The problem: when agent memory becomes noise

A few months ago, Claude Code shipped Auto Memory — a feature that lets the agent write notes to itself between sessions. As you correct the agent (“don’t use that library”, “prefer this pattern”, “the build runs with this command”), it saves those preferences in persistent memory files.

In theory, brilliant. The agent learns from you and improves each session.

In practice? By around session 20, the memory file starts turning into a messy notebook. Contradictions pile up: “use Vitest” on one line, “use Jest” on another. Temporal references lose meaning: “today the deploy broke” — but when was “today”? A week ago? Three months ago? Outdated context mixes with current information. Notes about a feature that was already removed coexist with active architectural decisions.

The result is counterintuitive: the agent starts performing worse precisely because it has too much memory. Noise overwhelms signal.

And the technical limit doesn’t help. MEMORY.md is loaded at the start of each session, but only the first 200 lines or 25KB, whichever comes first. If the memory is bloated with junk, the information that actually matters may fall outside that cutoff.
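To see how much of a memory file would survive that cutoff, a rough sketch like the one below can help. It is not Claude Code's loader: the function names are hypothetical, "25KB" is assumed to mean 25 * 1024 bytes, and the cut is assumed to happen on whole lines.

```python
MAX_LINES = 200          # line limit quoted in the article
MAX_BYTES = 25 * 1024    # assumption: "25KB" read as 25 * 1024 bytes


def loaded_portion(text: str, max_lines: int = MAX_LINES, max_bytes: int = MAX_BYTES) -> str:
    """Return the prefix of `text` that fits within both limits (whichever is hit first)."""
    kept = []
    used = 0
    for line in text.splitlines(keepends=True)[:max_lines]:
        size = len(line.encode("utf-8"))
        if used + size > max_bytes:
            break  # assumption: truncation happens on whole lines
        kept.append(line)
        used += size
    return "".join(kept)


def lines_cut_off(text: str) -> int:
    """Count how many lines fall outside the loaded prefix."""
    total = len(text.splitlines())
    return total - len(loaded_portion(text).splitlines())
```

Running `lines_cut_off` on the contents of your own MEMORY.md gives a quick estimate of how many notes the agent would never see under these assumptions.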

You know this feeling? It’s like that notebook you carry everywhere but never organize. After months, finding anything in it takes longer than simply remembering from scratch.

How the human brain solves this problem

Before I show you Claude Code’s solution, I need to tell you how nature solved this exact same problem millions of years ago. Because the analogy isn’t poetic — it’s technical.

Days accumulate. Nights consolidate.

During the day, your brain absorbs an absurd amount of information. Every conversation, every email, every line of code you read strengthens synaptic connections in your brain. Neuroscientists Giulio Tononi and Chiara Cirelli, from the University of Wisconsin-Madison, built the Synaptic Homeostasis Hypothesis around this observation (their 2014 review in Neuron summarizes it).

The central idea is elegant: during wakefulness, synapses strengthen indiscriminately. Everything seems important in the moment. But the brain has limited capacity — it can’t keep all connections strong indefinitely. If that happened, the system would saturate and you’d lose the ability to learn new things.

So what does the brain do? It waits until you sleep to do the cleanup.

During sleep, especially in slow-wave phases (NREM) and REM sleep, a process called synaptic downscaling occurs. Weak synapses (irrelevant information, details of the day that don’t matter) are weakened further or eliminated. Strong synapses (important learnings, meaningful memories) are preserved. The brain literally “prunes” what it no longer needs, restoring a healthy signal-to-noise ratio.

And the most impressive part: you don’t consciously decide what to keep and what to discard. The process is automatic.

The nightly replay

In 1994, researchers Matthew Wilson and Bruce McNaughton published a revolutionary study in Science. They implanted electrodes in the hippocampus of rats and discovered something extraordinary: during sleep, hippocampal neurons that had fired together during the day’s experiences fired together again. Later recordings showed that this replay preserves the original sequence, but compressed in time.

Rats that had navigated a maze during the day literally “dreamed” about the maze at night. The same neural activation sequences repeated, but at accelerated speed. The brain was essentially doing a compressed replay of everything that happened, deciding what to transfer to long-term memory.

Think of it as an automatic code review session. The brain reviews the day’s “code,” identifies what’s important, and moves it to production (neocortex). The rest is discarded.

The Tetris effect: unconscious processing

One of the most fascinating studies on this topic came from Robert Stickgold, a researcher at Harvard. In 2000, he published in Science an experiment with amnesic patients — people who couldn’t form new conscious memories.

Stickgold had the patients play Tetris for several hours. Then, as they fell asleep, they reported seeing falling pieces in the state between wakefulness and sleep. Patients who didn’t remember playing Tetris were dreaming about Tetris pieces.

The brain was processing the experience without any conscious participation. Memory consolidation happens in the background, below the radar of consciousness.

The cost of not sleeping

Matthew Walker, director of the Center for Human Sleep Science at UC Berkeley and author of “Why We Sleep,” demonstrated that sleep deprivation can reduce learning capacity by up to 40%. Not because the tired brain processes more slowly — but because without the nightly consolidation cycle, the previous day’s memories haven’t been organized, and the brain doesn’t have “space” to absorb new information.

In other words: without sleep, the brain behaves exactly like Claude Code’s Auto Memory after 20 sessions. Too much accumulated information, zero organization, degraded performance.

Auto Dream: your agent’s REM sleep

Now that you understand how the brain solves this problem, you’ll immediately understand what Auto Dream does. Because the analogy is almost literal.

Auto Dream is a process that runs in the background, without interrupting your work, and does exactly what REM sleep does for the brain:

- Reviews all past session transcripts (up to 900+) — like Wilson & McNaughton’s nightly replay

- Identifies what’s still relevant — like Tononi & Cirelli’s synaptic downscaling

- Removes outdated or contradictory memories — like synaptic pruning during sleep

- Consolidates everything into organized, indexed files — like the hippocampus-to-neocortex transfer

- Replaces vague references like “today” with real dates — like how the brain contextualizes memories with temporal markers
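The article does not describe how Auto Dream implements these steps, so the following is only a minimal sketch of two of them: resolving relative dates and dropping contradicted notes. The `Note` structure, the `topic` key, and the "newest note wins" rule are assumptions made for illustration; the real feature presumably relies on the model's judgment rather than a fixed rule.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class Note:
    topic: str      # hypothetical grouping key, e.g. "test-runner"
    text: str       # the note as the agent wrote it
    written: date   # the day the session took place


def absolutize(note: Note) -> Note:
    """Replace the relative word 'today' with the note's own date."""
    text = re.sub(r"\btoday\b", f"on {note.written.isoformat()}", note.text, flags=re.IGNORECASE)
    return Note(note.topic, text, note.written)


def consolidate(notes: list[Note]) -> list[Note]:
    """Keep only the newest note per topic, with relative dates resolved."""
    latest: dict[str, Note] = {}
    for note in sorted(notes, key=lambda n: n.written):
        latest[note.topic] = absolutize(note)  # later notes overwrite earlier ones
    return sorted(latest.values(), key=lambda n: n.topic)
```

Given an old "use Jest" note, a newer "use Vitest" note on the same topic, and "today the deploy broke" written on 2026-01-20, `consolidate` keeps "use Vitest" and rewrites the deploy note to "on 2026-01-20 the deploy broke".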

The table below shows how each biological mechanism has a direct equivalent in Auto Dream:

| Human Brain | Claude Code Auto Dream |
|---|---|
| Each day accumulates new synapses | Each session accumulates new memory notes |
| Hippocampus (temporary) transfers to neocortex (permanent) | Session context transfers to MEMORY.md + topic files |
| Synaptic downscaling removes weak connections | Removes outdated and contradictory memories |
| Nightly replay compresses and reprocesses experiences | Reviews and consolidates transcripts from 900+ sessions |
| Tetris effect: unconscious processing | Runs in the background without interrupting your work |
| Sleep deprivation = -40% learning capacity | Without Auto Dream = bloated memory, degraded agent |
| Limited synaptic capacity requires pruning | MEMORY.md limited to 200 lines/25KB requires consolidation |

How it works technically

Auto Dream doesn’t run every session. It has specific triggers and safeguards:

- Minimum time: at least 24 hours since the last consolidation

- Minimum volume: at least 5 sessions since the last consolidation

- Execution mode: read-only on project code — it only has write permission on memory files

- Conflict protection: uses a lock file to prevent multiple simultaneous executions

- No interruption: runs entirely in the background
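The trigger and lock conditions above can be sketched as follows. This is an illustration, not Claude Code's actual logic: the function names and the lock path are hypothetical, and the sketch assumes that both minimums must be met and that a never-run state satisfies the time condition.

```python
import os
from datetime import datetime, timedelta
from typing import Optional

MIN_INTERVAL = timedelta(hours=24)  # from the article: at least 24 hours
MIN_SESSIONS = 5                    # from the article: at least 5 sessions


def should_dream(last_run: Optional[datetime], sessions_since: int, now: datetime) -> bool:
    """Assumption: both thresholds must be met before a consolidation run starts."""
    enough_time = last_run is None or now - last_run >= MIN_INTERVAL
    return enough_time and sessions_since >= MIN_SESSIONS


def try_acquire_lock(path: str) -> bool:
    """Create the lock file atomically; return False if another run already holds it."""
    try:
        fd = os.open(path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    os.close(fd)
    return True


def release_lock(path: str) -> None:
    """Remove the lock file so a later run can start."""
    os.remove(path)
```

Using `os.O_CREAT | os.O_EXCL` makes the existence check and the file creation a single step, which is why two processes starting at the same moment cannot both acquire the lock.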

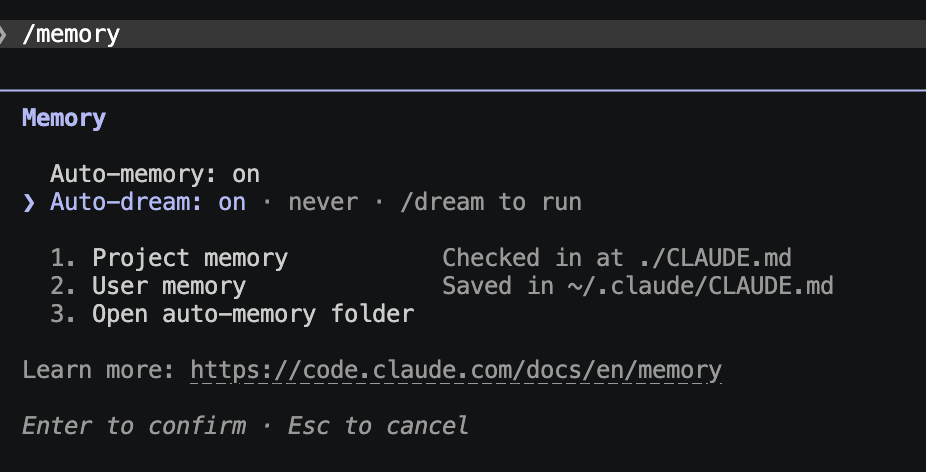

To activate, just run /memory in Claude Code. You’ll see something like:

Memory

Auto-memory: on

> Auto-dream: on · never · /dream to run

The “never” indicates Auto Dream hasn’t run yet. After the first execution, it shows the date of the last consolidation. You can also force a manual execution with /dream.

What changes day to day

The practical difference is significant. Before Auto Dream:

- Session 1-5: agent learns fast, memory is useful

- Session 10-15: memory starts getting noisy, but still functional

- Session 20+: agent starts following contradictory instructions, loses efficiency

- Session 50+: memory is more noise than signal, nearly useless

With Auto Dream:

- Session 1-5: same behavior

- Session 10-15: Auto Dream runs for the first time, consolidates and cleans

- Session 20+: memory stays clean and organized

- Session 50+: agent has a refined body of knowledge, no junk

- Session 100+: the agent genuinely improves over time

This fundamentally changes the agent’s value curve. Instead of degrading with use, it improves. Code preferences, debugging patterns, team conventions, build commands, architectural decisions — all consolidated, organized, indexed.

For those working on long-running projects, this is transformative. The agent stops being a disposable assistant that forgets everything and becomes a partner that evolves alongside the project.

The trend: agents modeled after biology

What strikes me most about Auto Dream isn’t the feature itself — it’s the pattern it reveals.

We’re increasingly modeling AI agents after biological mechanisms:

- Teams of sub-agents that mimic human organizational structures — one agent plans, another implements, another reviews, like an engineering team

- Episodic memory that mimics how humans remember past experiences — not as raw data, but as narratives with context

- And now, agents that “dream” to consolidate memory — exactly like the human brain does during REM sleep

This isn’t coincidence. Biological evolution had millions of years to optimize information processing systems. When AI engineers face the same problems (information accumulation, need to filter noise, learning consolidation), they end up converging on analogous solutions.

The best AI tools in 2026 aren’t about bigger context windows. They’re about smarter memory management. A 1-million-token context window doesn’t help if 80% is noise. Auto Dream understood this.

Suggestion: Auto Dream + mcp-graph

If you follow my work, you know I maintain mcp-graph — an open-source tool that transforms PRDs into persistent execution graphs for structured AI development.

mcp-graph operates in an 8-phase cycle: ANALYZE, DESIGN, PLAN, IMPLEMENT, VALIDATE, REVIEW, HANDOFF, and LISTENING. Each phase feeds the next, and the graph records everything.

When I saw Auto Dream, I immediately thought: what if mcp-graph could consume the memories consolidated by Auto Dream?

Imagine an additional phase — or an evolution of the LISTENING phase — called Memory Graph:

- Auto Dream consolidates memories from 100+ sessions into organized files

- mcp-graph ingests those files and maps relationships between memories in the knowledge graph

- Architectural decisions, recurring patterns, fixed bugs — all connected as nodes in the graph

- The agent doesn’t just remember — it understands the structure of what it learned

This would be a layer of metacognition: the agent looks at its own consolidated memories and extracts high-level patterns. “In the last 3 months, 70% of bugs were related to input validation” — this kind of insight only emerges when you have organized memory AND a graph that connects everything.
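To make the idea concrete, here is a small sketch of what such a graph could look like. None of this is mcp-graph's existing API: `MemoryNode`, `MemoryGraph`, and the tag-based linking rule are hypothetical names and choices used only to show how a statistic like the one above could fall out of connected memories.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field


@dataclass
class MemoryNode:
    node_id: str
    kind: str                                   # e.g. "bug", "decision", "pattern"
    tags: set[str] = field(default_factory=set)


class MemoryGraph:
    def __init__(self) -> None:
        self.nodes: dict[str, MemoryNode] = {}
        self.edges: dict[str, set[str]] = defaultdict(set)

    def add(self, node: MemoryNode) -> None:
        """Store the node and link it to every existing node that shares a tag."""
        self.nodes[node.node_id] = node
        for other in self.nodes.values():
            if other.node_id != node.node_id and other.tags & node.tags:
                self.edges[node.node_id].add(other.node_id)
                self.edges[other.node_id].add(node.node_id)

    def tag_share(self, kind: str) -> dict[str, float]:
        """Fraction of nodes of `kind` that carry each tag."""
        selected = [n for n in self.nodes.values() if n.kind == kind]
        counts = Counter(tag for n in selected for tag in n.tags)
        return {tag: count / len(selected) for tag, count in counts.items()}
```

With ten "bug" nodes, seven of them tagged "input-validation", `tag_share("bug")["input-validation"]` comes out at 0.7, which is the kind of pattern the Memory Graph would surface.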

In neuroscience, this has a direct parallel. The neocortex doesn’t just store memories — it connects them in semantic networks. You don’t remember isolated facts; you remember relationships between facts. The Memory Graph would do exactly that for the agent.

If you’re interested in this kind of integration, the project is open on GitHub: github.com/DiegoNogueiraDev/mcp-graph-workflow. Issues and contributions are welcome.

Conclusion

Auto Dream is one of those features that seems simple on the surface but reveals a profound shift in how we think about AI agents.

We’re no longer just expanding context windows or adding more tools. We’re giving agents something that until now was exclusive to biological systems: the ability to dream in order to learn better.

Tononi, Cirelli, Walker, Stickgold — these researchers spent decades understanding why the brain needs to sleep. The answer, simplified, is: because accumulating information without organizing it is a recipe for collapse. Sleep is the maintenance mechanism that allows the brain to keep learning indefinitely.

Auto Dream is Claude Code’s REM sleep. And I suspect that soon, every serious agent will have something equivalent.

To try it out, open Claude Code and run /memory. Auto Dream is already there, waiting to consolidate what the agent has learned about you and your project.

Want more tips like this about applied AI, agents, and development tools? Follow me to stay updated.

References:

- Tononi, G. & Cirelli, C. — “Sleep and the Price of Plasticity: From Synaptic and Cellular Homeostasis to Memory Consolidation and Integration” (Neuron, 2014)

- Wilson, M. A. & McNaughton, B. L. — “Reactivation of hippocampal ensemble memories during sleep” (Science, 1994)

- Stickgold, R. et al. — “Replaying the game: Hypnagogic images in normals and amnesics” (Science, 2000)

- Walker, M. — “Why We Sleep: Unlocking the Power of Sleep and Dreams” (2017)

- Stickgold, R. — “Sleep-dependent memory consolidation” (Nature, 2005)

- Official Claude Code Memory documentation: https://code.claude.com/docs/en/memory